The $10,000 Microwave: Why Your "Automated Agency" is a House of Cards

I recently sat through a presentation for a "revolutionary" new automation solution designed to handle client deliverables at scale. The promise was alluring: minimize human labor, maximize output, and let the AI do the heavy lifting.

But as the technical lead started listing the stack, my "engineering dread" didn't just tingle — it screamed.

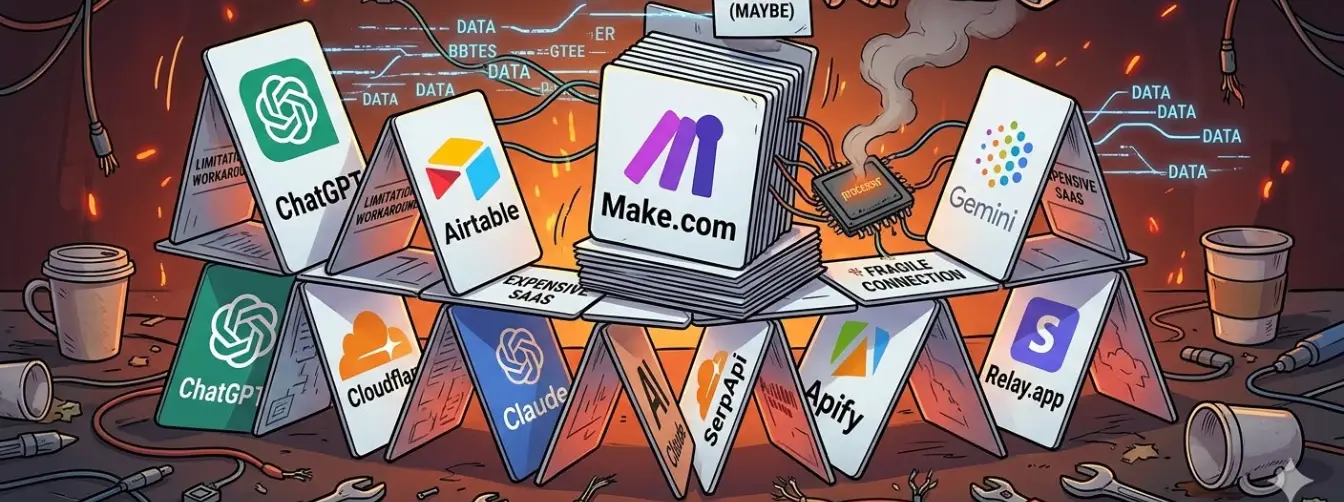

The setup they proudly presented was a grotesque Rube Goldberg machine of disconnected tools, held together by SaaS subscriptions and blind hope:

- Airtable — as the UI

- Make.com — as the orchestrator

- Cloudflare — for webhooks

- Relay.app — for "avatars"

- SerpApi — for search data

- Apify — for scraping

- Gemini, ChatGPT, and Claude — all running simultaneously

This isn't a technical solution. This is what happens when you let marketing gurus design software architecture without consulting a single experienced engineer.

The Problem: Weaving an Impossible Blanket

Whenever I asked "Why?" during the presentation — "Why this expensive intermediary tool?", "Why this extra database layer?" — the answer was almost always:

"Because it was easier to set up in Make."

Or worse:

"Because Make had a limitation, so we needed this workaround."

When your architectural choices are dictated by the limitations of your "ease-of-use" tool, you aren't building a solution. You are building technical debt. You are building a system whose primary requirement is "please don't break the Make scenario."

Every SaaS in the chain is a point of failure. Every webhook is a prayer. Every "sync" operation is a data consistency gamble.
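To put a rough number on that, here is a back-of-the-envelope sketch. It assumes that each service fails independently and that a run needs every service to be up. The 99.5% figure is purely illustrative, not a measured value or SLA for any tool named above, and `chainAvailability` is a hypothetical helper.

```typescript
// Availability of a chain in which every link must be up for a run to succeed.
// Assumes independent failures; the inputs are illustrative, not vendor SLAs.
function chainAvailability(perServiceAvailability: number[]): number {
  return perServiceAvailability.reduce((acc, a) => acc * a, 1);
}

// Nine hosted services (the stack listed earlier, counting the three LLM vendors
// separately), each assumed to be up 99.5% of the time.
const stack: number[] = Array(9).fill(0.995);
const combined = chainAvailability(stack);

console.log(`Combined availability: ${(combined * 100).toFixed(1)}%`);
```

Under those assumptions the chain as a whole is up only about 95.6% of the time, even though every individual link looks reliable on its own.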

The "Avatar" Trap: Why One Agent Replaces Three Subscriptions

The most glaring example was their use of Relay.app to build "Client Avatars." To a non-engineer, "Persona Synthesis" looks like magic. To an architect, it looks like a redundant tax on your workflow.

They were paying for a UI to do something an LLM is natively designed for: Structured Synthesis.

When you use an external tool for this, you're adding latency, cost, and fragility — for zero additional capability.

The Engineered Approach: The "Persona Synthesis" Skill

Instead of sending data out to another SaaS, we build a Custom Agent with a specialized Skill. This agent doesn't "write bios." It transforms raw research data into a schema-validated JSON object that every other part of your system can read.

1. Define the Schema (The Blueprint)

First, we define exactly what an "Avatar" is. We don't guess. We define the shape in code, so every output can be checked against it before it reaches our content pipeline:

```typescript
// src/types/persona.ts
export interface ClientAvatar {
  brandVoice: "Professional" | "Conversational" | "Technical";
  targetPainPoints: string[];
  forbiddenKeywords: string[];
  internalJargon: Record<string, string>;
  competitorFocus: string[];
}
```
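A TypeScript interface is erased at compile time, so on its own it cannot check what a model returns. One way to enforce the blueprint at runtime is a hand-written type guard, sketched below. `isClientAvatar` and its helpers are hypothetical names that do not appear in the original setup, and a schema-validation library would serve equally well.

```typescript
interface ClientAvatar {
  brandVoice: "Professional" | "Conversational" | "Technical";
  targetPainPoints: string[];
  forbiddenKeywords: string[];
  internalJargon: Record<string, string>;
  competitorFocus: string[];
}

const BRAND_VOICES: string[] = ["Professional", "Conversational", "Technical"];

const isStringArray = (v: unknown): v is string[] =>
  Array.isArray(v) && v.every((item) => typeof item === "string");

const isStringRecord = (v: unknown): v is Record<string, string> =>
  typeof v === "object" && v !== null && !Array.isArray(v) &&
  Object.values(v).every((item) => typeof item === "string");

// Hypothetical runtime guard: true only if `value` has the ClientAvatar shape.
function isClientAvatar(value: unknown): value is ClientAvatar {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  const voice = v.brandVoice;
  return (
    typeof voice === "string" &&
    BRAND_VOICES.includes(voice) &&
    isStringArray(v.targetPainPoints) &&
    isStringArray(v.forbiddenKeywords) &&
    isStringRecord(v.internalJargon) &&
    isStringArray(v.competitorFocus)
  );
}

// Model output arrives as text, so it is parsed to `unknown` and then checked.
const candidate: unknown = JSON.parse(
  '{"brandVoice":"Technical","targetPainPoints":[],"forbiddenKeywords":[],' +
  '"internalJargon":{},"competitorFocus":[]}'
);
console.log(isClientAvatar(candidate));
```

Anything that fails the guard can be rejected or retried before it touches the content pipeline.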

2. The Agent "Skill" Implementation

We then give our Architect Agent the following instruction set. It doesn't need a "no-code" block — it needs a clear protocol:

- Skill: Synthesize_Avatar

- Input: Scraped data from the client's "About Us" and "Services" pages

- Process: Extract core value propositions and linguistic markers

- Validation: Cross-reference against the ClientAvatar interface

- Output: A single, minified JSON string ready for the "Writer" agent's system prompt
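The protocol above could be sketched roughly as follows. The model call is injected as a plain function (`callModel`, hypothetical) so the sketch makes no claim about any vendor SDK. `synthesizeAvatar`, the retry count, and the prompt wording are likewise assumptions for illustration.

```typescript
type ModelCall = (prompt: string) => Promise<string>;
type Guard<T> = (value: unknown) => value is T;

// Hypothetical skill: raw page text in, validated and minified JSON out.
async function synthesizeAvatar<T>(
  scrapedPages: string[],
  callModel: ModelCall,
  isValid: Guard<T>,
  maxAttempts = 2,
): Promise<string> {
  const prompt =
    "Extract the core value propositions and linguistic markers from the " +
    "pages below. Reply with a single JSON object only.\n\n" +
    scrapedPages.join("\n---\n");

  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const raw = await callModel(prompt);
    try {
      const parsed: unknown = JSON.parse(raw);
      // Validation step: only schema-conforming output is returned.
      // JSON.stringify without an indent argument produces minified JSON.
      if (isValid(parsed)) return JSON.stringify(parsed);
    } catch {
      // Malformed JSON: fall through and retry.
    }
  }
  throw new Error(`No valid avatar after ${maxAttempts} attempts`);
}
```

Passing the validator in as a parameter keeps the skill independent of any one schema, and a failed validation surfaces as an error instead of silently writing a malformed persona downstream.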

3. Why This Destroys the "Make + Relay" Setup

When you keep this logic inside a Custom Agent:

- Zero Integration Latency: You aren't waiting for a webhook to travel from Make to Relay and back. The synthesis runs in the same process as the rest of your pipeline, with no extra hop between SaaS tools

- Deterministic Quality: The Agent's output is validated against your schema at runtime, so a "hallucinated" persona that doesn't fit your database is rejected before it gets there. (TypeScript types are erased at compile time, so this has to be an explicit validation step, not just a type annotation.)

- Contextual Memory: The Avatar becomes part of the Agent's Metadata. When you ask the Agent to "Write a blog post," it already is the persona. You don't have to "send" the avatar to it, because it lives inside the agent's definition
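One way to read "the avatar lives inside the agent's definition" is that the validated avatar is rendered into the Writer agent's system prompt once, when the agent is constructed. The sketch below assumes that reading. `buildWriterSystemPrompt`, the prompt wording, and the sample avatar values are all hypothetical.

```typescript
interface ClientAvatar {
  brandVoice: "Professional" | "Conversational" | "Technical";
  targetPainPoints: string[];
  forbiddenKeywords: string[];
  internalJargon: Record<string, string>;
  competitorFocus: string[];
}

// Hypothetical helper: bakes the avatar into the agent's system prompt so that
// individual requests ("Write a blog post") never need to resend it.
function buildWriterSystemPrompt(avatar: ClientAvatar): string {
  return [
    "You write content for the client described by this JSON persona.",
    "Match brandVoice, address targetPainPoints, never use forbiddenKeywords,",
    "and treat internalJargon as a term-to-meaning glossary.",
    "",
    JSON.stringify(avatar),
  ].join("\n");
}

// Illustrative values only.
const sampleAvatar: ClientAvatar = {
  brandVoice: "Technical",
  targetPainPoints: ["slow reporting"],
  forbiddenKeywords: ["synergy"],
  internalJargon: { "the board": "the client's analytics dashboard" },
  competitorFocus: [],
};

const systemPrompt = buildWriterSystemPrompt(sampleAvatar);
console.log(systemPrompt);
```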

The "Automation Expert" will tell you that you need Relay.app because "it's built for this." They are wrong. It is built for people who can't write a schema.

By implementing this as a Custom Skill within a unified Next.js/Claude architecture, you eliminate:

- The Relay.app subscription ($$$)

- The Make.com "Operation" costs for the sync ($)

- The maintenance of the API bridge between them ($$$)

You get a faster, cheaper, and infinitely more stable "Avatar" that actually understands the business — because you defined the rules, not a third-party template.

The Technical Takedown: SaaS Frankenstein vs. Clean Engineering

| Feature | The SaaS Frankenstein | The Clean Stack |
|---|---|---|
| Orchestration | Make.com: limited by "blocks," high per-operation cost | Node.js/Go: arbitrary logic, no per-operation fee |
| Avatars | Relay.app: expensive, external, disconnected | Custom Skill: integrated, schema-validated JSON |
| Interface | Airtable: a slow spreadsheet masquerading as a UI | Next.js: fast, branded, optimized for client workflows |
| Maintenance | Extreme: 8+ points of failure, high "SaaS Janitor" time | Low: centralized code, standardized error handling |
| Total Cost | $$$$$: cascading monthly subscriptions | $: pay only for the raw API tokens you use |

The Right Way: A Modern Engineering Solution

An experienced engineer wouldn't play tech stack bingo. They would design a small, deterministic system:

- Modern Interface (Next.js): A clean dashboard for managing clients and keywords

- Microservices: Specialized services for search data (SerpApi) and LLM orchestration

- Claude 3.5 Sonnet (with Skills): A single Agent configured with specific Tools

This Agent doesn't just "write." It executes a secure workflow:

```typescript
// The agent's execution pipeline
const pipeline = async (client: ClientAvatar, keyword: string) => {
  // Step 1: Research
  const searchData = await tools.scrape_search_intent(keyword);

  // Step 2: Analysis
  const gaps = await skills.analyze_content_gaps(searchData, client);

  // Step 3: Generation (the Agent IS the persona)
  const article = await skills.generate_content(gaps, client);

  return article; // Schema-validated, optimized, on-brand
};
```

Three steps. One codebase. Zero "sync" operations. Zero prayers.
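The pipeline snippet leaves `tools` and `skills` undefined. Below is a minimal sketch of how they might be wired in, with stub implementations standing in for the real search and LLM calls. The `Tools` and `Skills` interfaces, `makePipeline`, the data shapes (plain string arrays), and the stub return values are all assumptions for illustration.

```typescript
interface ClientAvatar {
  brandVoice: "Professional" | "Conversational" | "Technical";
  targetPainPoints: string[];
  forbiddenKeywords: string[];
  internalJargon: Record<string, string>;
  competitorFocus: string[];
}

interface Tools {
  scrape_search_intent(keyword: string): Promise<string[]>;
}

interface Skills {
  analyze_content_gaps(searchData: string[], client: ClientAvatar): Promise<string[]>;
  generate_content(gaps: string[], client: ClientAvatar): Promise<string>;
}

// Dependencies are passed in, so the same three steps run against stubs in
// tests and against real services in production.
const makePipeline = (tools: Tools, skills: Skills) =>
  async (client: ClientAvatar, keyword: string): Promise<string> => {
    const searchData = await tools.scrape_search_intent(keyword);
    const gaps = await skills.analyze_content_gaps(searchData, client);
    return skills.generate_content(gaps, client);
  };

// Stubs (hypothetical): a real version would call a search API and an LLM.
const stubTools: Tools = {
  scrape_search_intent: async (keyword) => [`what is ${keyword}`],
};
const stubSkills: Skills = {
  analyze_content_gaps: async (searchData) => searchData.map((q) => `gap: ${q}`),
  generate_content: async (gaps, client) => `[${client.brandVoice}] ${gaps.join("; ")}`,
};

const demoClient: ClientAvatar = {
  brandVoice: "Technical",
  targetPainPoints: [],
  forbiddenKeywords: [],
  internalJargon: {},
  competitorFocus: [],
};

const runPipeline = makePipeline(stubTools, stubSkills);
runPipeline(demoClient, "crm").then((article) => console.log(article));
```

Because every external call sits behind an interface, a failing search provider or model can be swapped or mocked in one place, which is the "centralized code" advantage the comparison table claims.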

The Verdict: 1/10th the Time, 1/100th the Debt

The "SaaS Frankenstein" solution is a $10,000 microwave. When the frozen pizza doesn't fit, the consultant will tell you to buy a second microwave.

The engineered solution is a professional kitchen. It's built in 1/10th of the time because you aren't fighting "No-Code" limitations. It costs 1/10th as much because you aren't paying for "convenience" UIs. And it has 1/100th the maintenance cost because you actually own the logic.

The moral of the story? Do not design a kitchen without consulting a chef. In professional software, "Easy" is an expensive illusion.